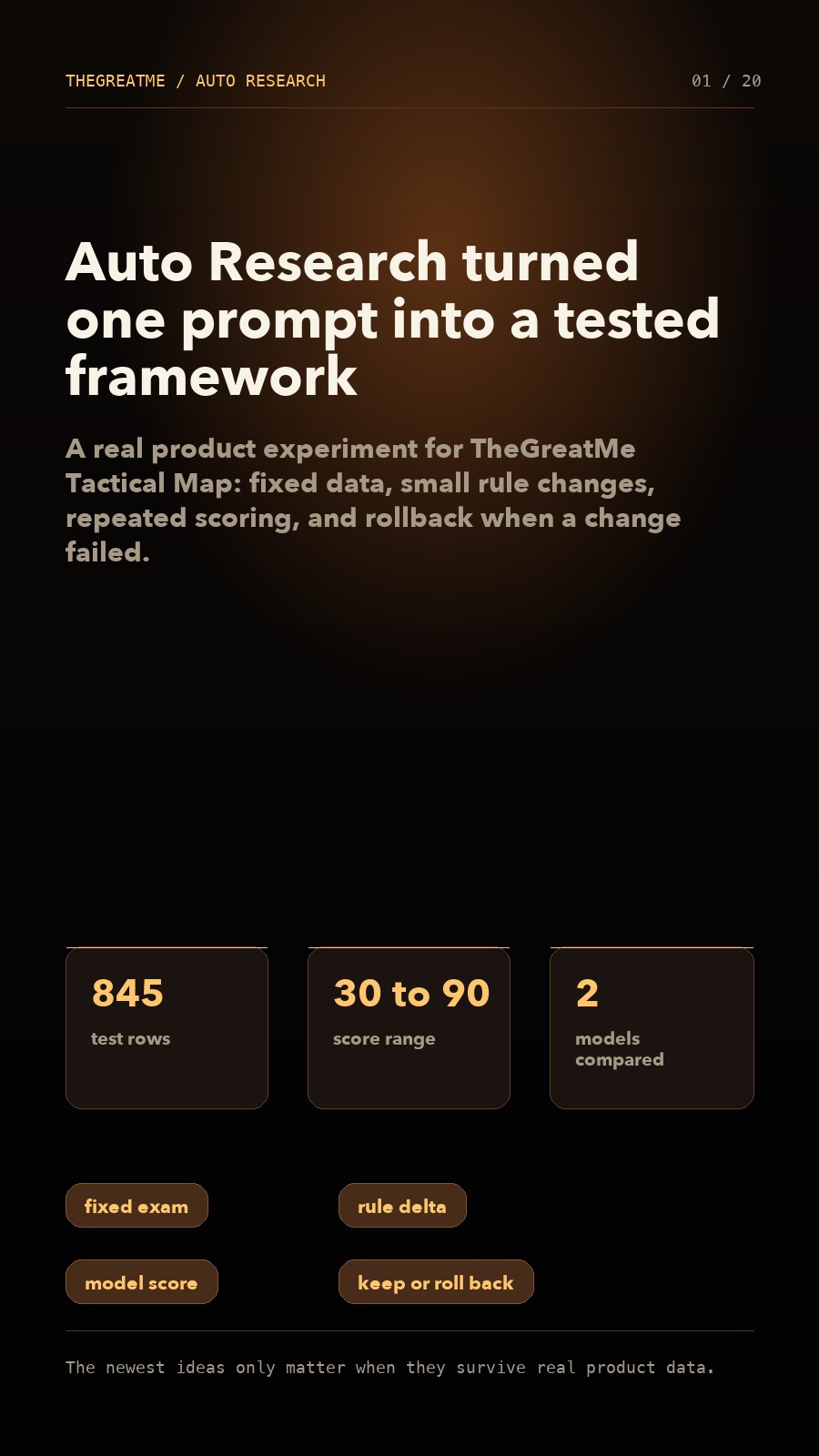

I Ran Nearly 1,000 Auto Research Tests and Turned AI Product Prompts from Guesswork into a Framework

A real product experiment for TheGreatMe's Tactical Map: 845 test rows, 744 real model evaluations, and a jump from the 30s to the 90s.

I Used Auto Research to Run Nearly 1,000 Tests on My App and Raised a Prompt from 30 Points to 90

How can an ordinary builder actually use the newest ideas from top AI researchers to improve a real product?

Hi, I'm Qihua.

Recently I have been grinding hard on the Mac version of TheGreatMe. This time I am treating it almost like a piece of craftsmanship, so progress has been a little slower, but it is getting very close.

In the Mac version, I designed a dashboard that feels a bit like something from a spy film. One of my favorite core features inside it is called the Tactical Map.

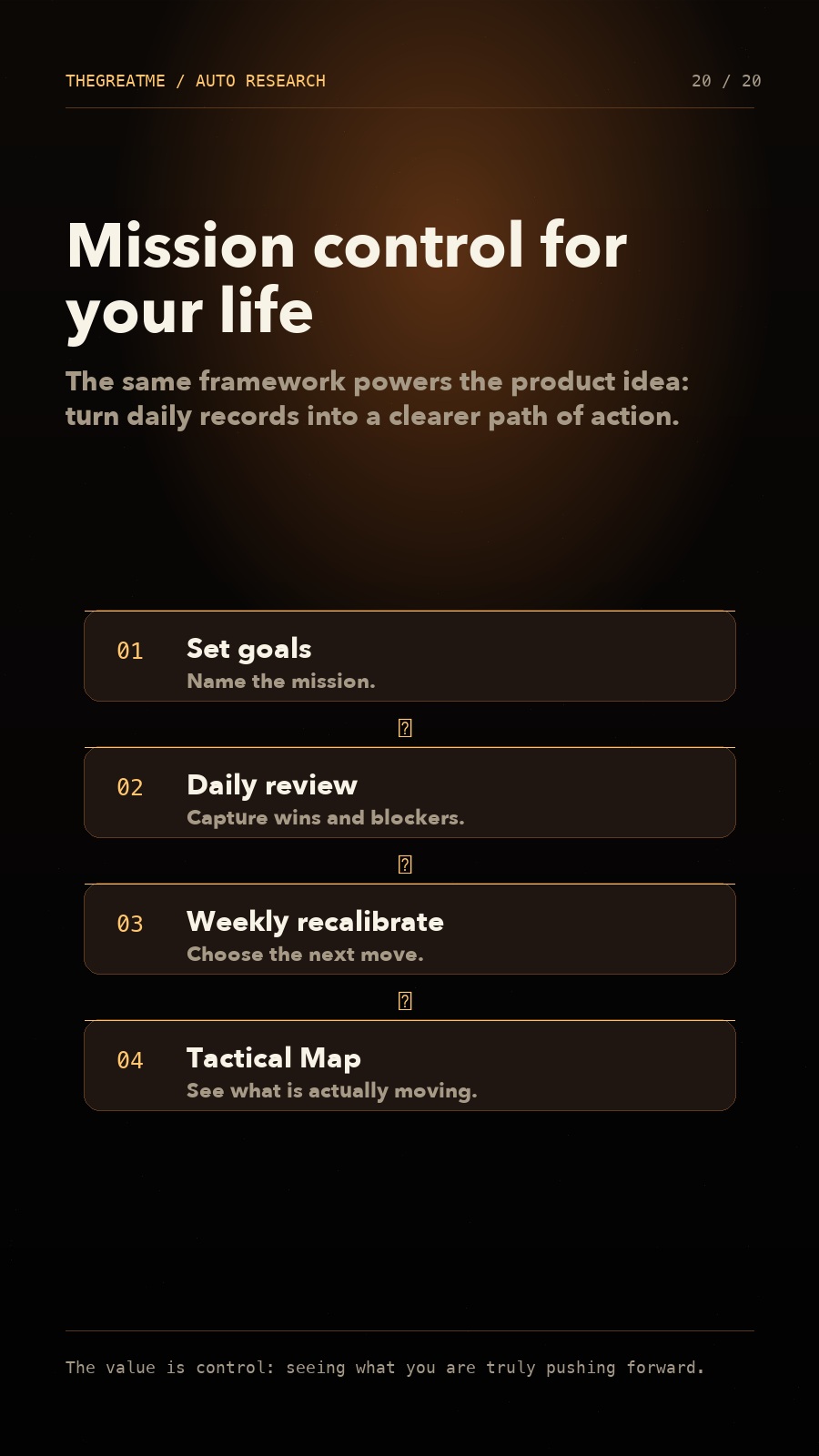

What is the Tactical Map?

TheGreatMe is a personal growth app that helps users set life goals, check in every day, run weekly reviews, and keep iterating on themselves.

The Tactical Map does one simple thing: it reads your daily check-ins, weekly reviews, and conversations with AI, then automatically extracts the events, projects, products, and tasks you are actually moving forward. It turns them into a map of your action path.

In other words, you can see at a glance:

- what your real main storyline has been recently;

- which things are influencing each other;

- how a project has developed over time;

- which nodes are real milestones, and which are only temporary states.

If this feature works well, it is no longer just a record page. It becomes a system that helps you understand your own path of action.

But at the beginning, I was not satisfied with the result at all.

The biggest problem: the extracted items were too fragmented

The early Tactical Map often treated temporary, local details as independent events.

Dates, version numbers, one-off progress states, and even descriptions like "completed 5 items" could all become bubbles on the map. The result was a map full of things, but with no clear main storyline.

The relationship between primary and secondary events was also unstable. Sometimes a major event contained a pile of things that did not really belong to it. Other times, one clear storyline was split into many fragments.

Before this, prompt optimization usually meant looking at one wrong output, then changing the prompt by feel. That easily becomes a tradeoff loop: fixing one problem breaks another rule.

That is why I was never fully happy with the feature. It had potential, but it was not stable enough.

Auto Research gave me a new way to think

A while ago, Andrej Karpathy mentioned an idea I found very interesting: Auto Research.

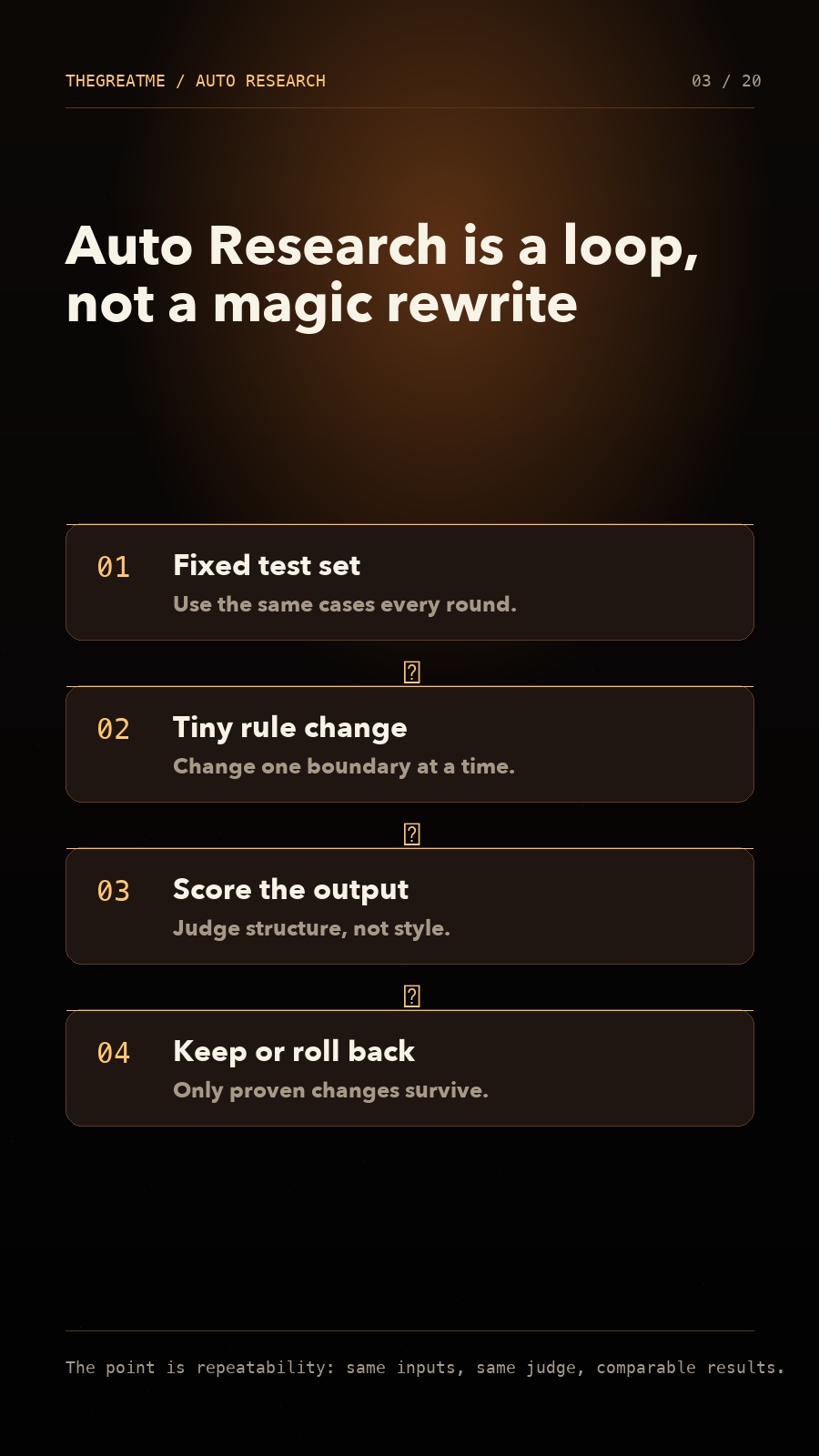

The core idea is not to ask AI to fix everything at once. Instead, you split the task into small pieces, build a stable scoring mechanism, change only a little each time, then test, score, keep useful changes, and discard useless ones.
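A minimal sketch of that loop in Python, assuming a prompt is just a string, `propose` makes one small edit, and `score` runs the same fixed test set every time. All names are hypothetical; this is a greedy keep-or-discard search written for illustration, not Karpathy's code or the exact harness used for the Tactical Map.

```python
import random
from typing import Callable, List, Tuple


def auto_research(
    baseline: str,
    propose: Callable[[str], str],  # hypothetical: returns a slightly edited prompt
    score: Callable[[str], float],  # hypothetical: runs the fixed test set, returns a score
    rounds: int,
) -> Tuple[str, float, List[float]]:
    """Greedy keep-or-discard loop over prompt variants."""
    best, best_score = baseline, score(baseline)
    history = [best_score]
    for _ in range(rounds):
        candidate = propose(best)           # change only a little each time
        candidate_score = score(candidate)  # same samples, same scoring, every round
        if candidate_score > best_score:    # keep useful changes
            best, best_score = candidate, candidate_score
        # otherwise the candidate is simply discarded
        history.append(best_score)
    return best, best_score, history


# Toy usage: the "prompt" is a list of rules, one per line.
RULE_POOL = ["no dates as titles", "no version numbers", "protect manual titles", "be creative"]
HELPFUL = set(RULE_POOL[:3])
HARMFUL = {"be creative"}


def toy_score(prompt: str) -> float:
    rules = set(prompt.split("\n"))
    return float(len(rules & HELPFUL) - len(rules & HARMFUL))


rng = random.Random(0)


def toy_propose(prompt: str) -> str:
    return prompt + "\n" + rng.choice(RULE_POOL)


best, best_score, history = auto_research("extract events", toy_propose, toy_score, rounds=20)
```

Because only strict improvements are kept, the best score can never go down, and a harmful rule like "be creative" is always discarded.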

I liked this idea immediately.

At first, I tried to use it to improve articles, but writing is hard to quantify. What counts as better? More emotional? Better structured? More persuasive? These qualities can be judged, but they are difficult to score consistently.

Then I realized that prompts and rules inside AI products are actually a much better fit for Auto Research.

They contain parts that are easy to quantify, such as whether the JSON format is stable, whether the output follows rules, and whether latency stays acceptable. They also contain parts that are suitable for model-based judgment, such as whether the relationship between primary and secondary events is reasonable, whether event names are clear, and whether the main storyline stands out.
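One way to combine the two kinds of checks is a hard gate followed by a judged score. This is a hypothetical sketch: the `events` schema, the 20-second latency limit, the 0.4 weight, and the `judge` callable (standing in for a model-based grader) are all assumptions, not the article's actual scoring.

```python
import json
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RunResult:
    raw_output: str   # text returned by the extraction model
    latency_s: float  # seconds the call took


def hard_checks(run: RunResult, max_latency_s: float = 20.0) -> Optional[dict]:
    """Deterministic gate: parsed JSON if format and latency are acceptable, else None."""
    if run.latency_s > max_latency_s:
        return None
    try:
        data = json.loads(run.raw_output)
    except json.JSONDecodeError:
        return None
    # Hypothetical schema: a top-level object with an "events" list.
    if not isinstance(data, dict) or not isinstance(data.get("events"), list):
        return None
    return data


def combined_score(
    run: RunResult,
    judge: Callable[[dict], float],  # hypothetical model-based judge returning 0-100
    hard_weight: float = 0.4,
) -> float:
    """0-100 score: failing the hard checks scores 0; otherwise blend a fixed
    pass credit with the judge's rating of structure quality."""
    data = hard_checks(run)
    if data is None:
        return 0.0
    return hard_weight * 100.0 + (1.0 - hard_weight) * judge(data)


def fake_judge(data: dict) -> float:
    return 50.0  # stand-in for an LLM judge


good = RunResult('{"events": [{"title": "Ship the Mac dashboard"}]}', 3.2)
broken = RunResult('{"events": [', 3.2)
slow = RunResult('{"events": []}', 30.0)
```

Gating first means a malformed or slow response can never be rescued by a generous judge.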

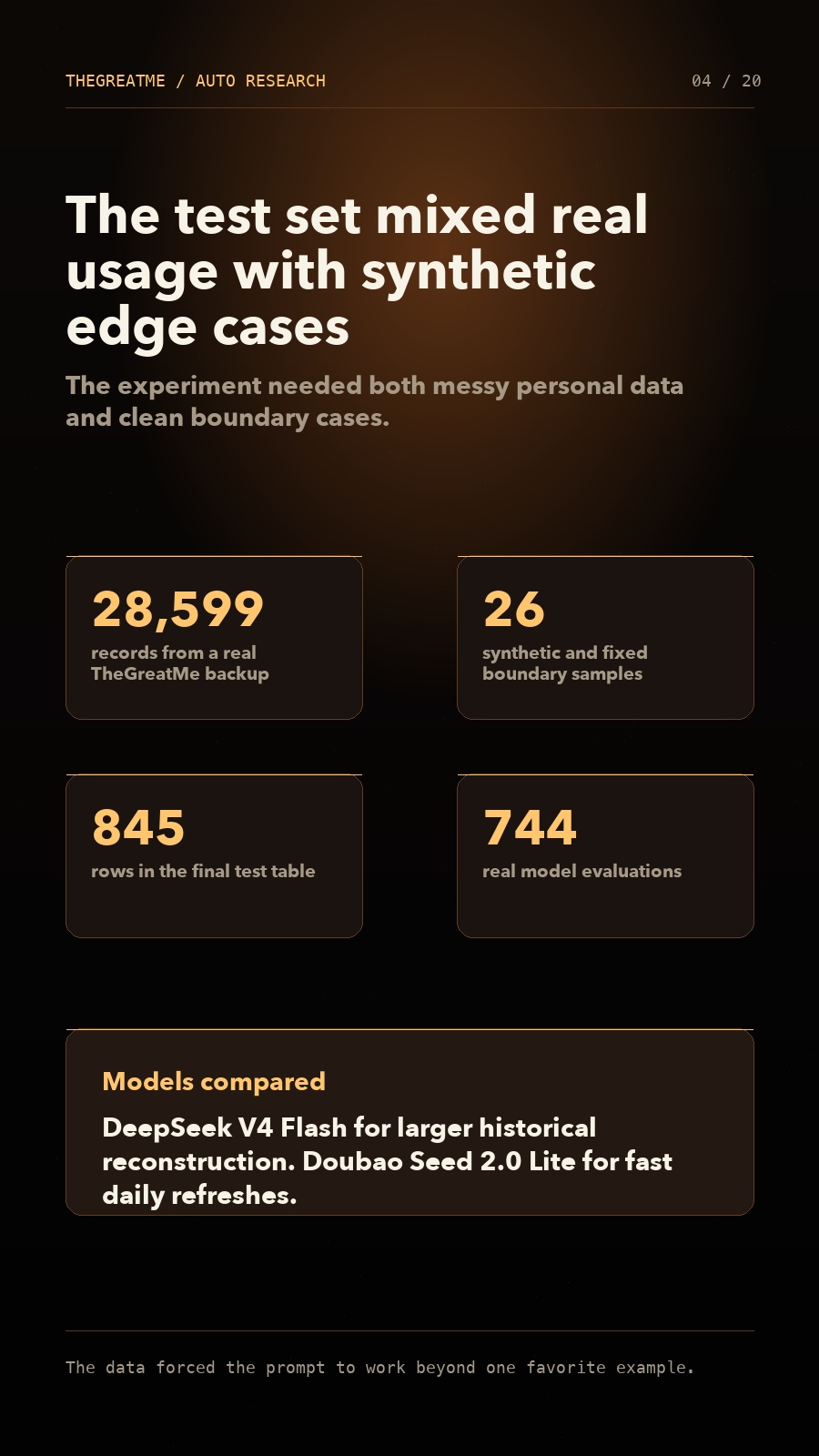

I prepared two kinds of data

For this experiment, I used DeepSeek V4 Flash and Doubao Seed 2.0 Lite 260428 as the main extraction models.

I prepared two kinds of data.

The first was real data from my own TheGreatMe backup ZIP: 28,599 records, 23 attachments, 50 event entities, and 298 event updates. The set included both active events and archived events.

The second was synthetic data. My own usage data is skewed toward indie development, so I asked GPT to generate 20 synthetic samples from people in different professions, then added 6 fixed edge-case samples. The edge-case samples covered fragmentation, mainlines versus side quests, multiple parallel work streams, manual-title protection, and the rule that deleted or muted events must not be revived.

In the end, I had 845 rows of test records, including 744 real model evaluations. Synthetic samples and real ZIP samples each made up about half of the test set.
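A fixed exam can be modeled as a grid in which every combination of model, prompt version, and sample is one test row. This layout is an assumption: the article reports 845 rows and 744 model evaluations but does not say how they were split across models and versions, so this toy grid does not reproduce those counts. `Sample`, `build_exam`, and `synthetic_share` are hypothetical names.

```python
from dataclasses import dataclass
from itertools import product
from typing import List, Tuple


@dataclass(frozen=True)
class Sample:
    sample_id: str
    kind: str  # "real" (from the backup ZIP) or "synthetic"


Row = Tuple[str, str, Sample]  # (model, prompt version, sample)


def build_exam(models: List[str], versions: List[str], samples: List[Sample]) -> List[Row]:
    """Every (model, prompt version, sample) combination becomes one test row."""
    return list(product(models, versions, samples))


def synthetic_share(rows: List[Row]) -> float:
    """Fraction of rows that use a synthetic sample."""
    return sum(1 for _, _, s in rows if s.kind == "synthetic") / len(rows)


samples = [Sample(f"real-{i}", "real") for i in range(3)] + [
    Sample(f"synth-{i}", "synthetic") for i in range(3)
]
rows = build_exam(["deepseek", "doubao"], ["v1", "v2"], samples)
```

Keeping the grid fixed is what makes two prompt versions comparable: they face exactly the same questions.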

This was not a casual "run it a few times and see how it feels" experiment. I put the models through a fixed exam again and again.

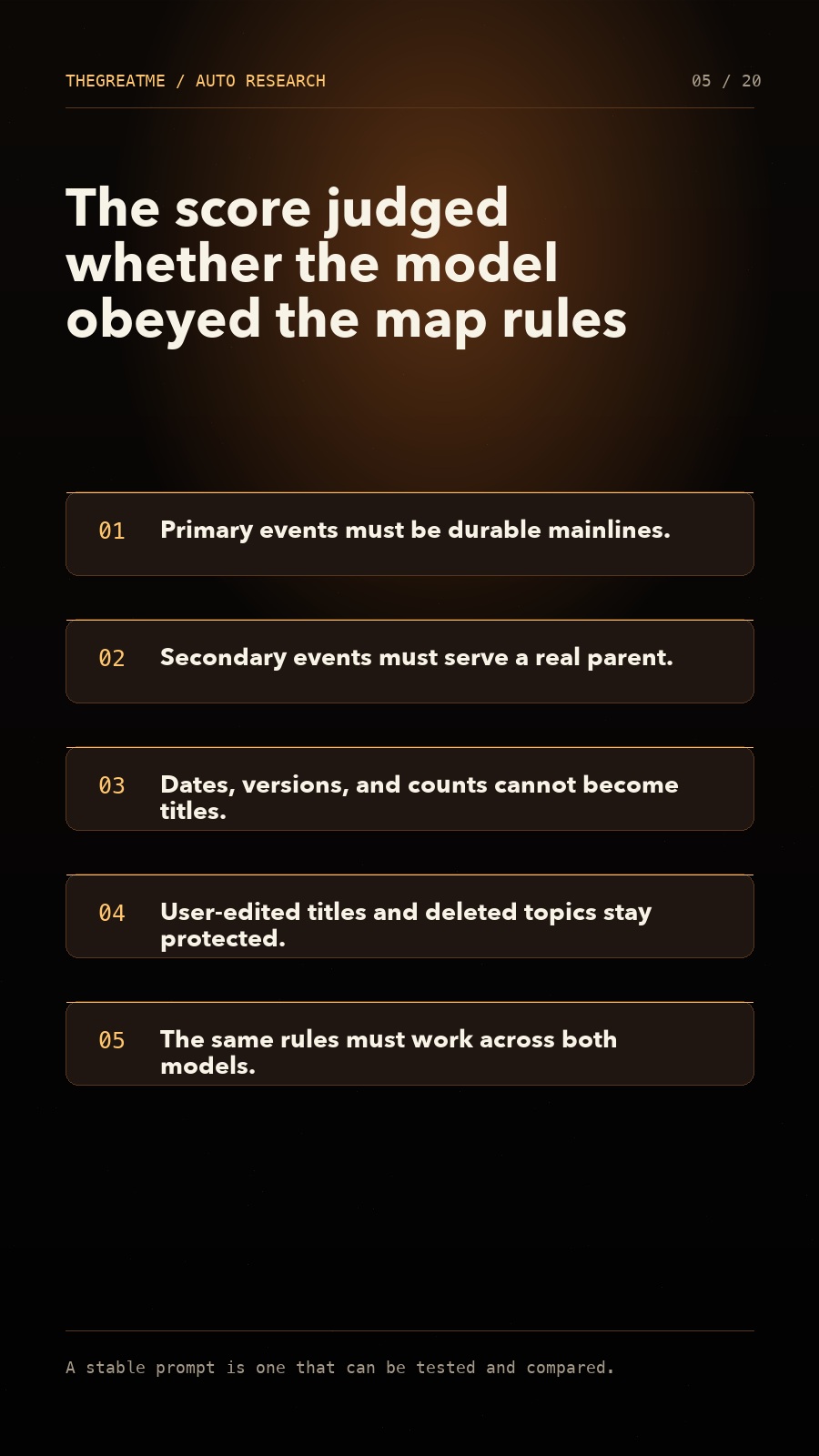

The scoring rule: not whether it writes well, but whether it obeys

I defined several very clear rules for the Tactical Map.

First, primary events must be real mainlines. Not every noun that appears in the source material is allowed to become a primary event.

Second, secondary events must serve the primary event. They cannot be attached randomly.

Third, dates, version numbers, quantities, and one-off states cannot become event titles.

Fourth, manually edited titles must be protected, and deleted or muted events must not be revived by AI.

Fifth, the same rules must work for both DeepSeek and Doubao. A good prompt should not work for only one model.

This part is important. A stable prompt is not one that sounds elegant. It is one that can be tested, reproduced, and compared.
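Rules three and four are mechanical enough to check in code, while rules one and two need a model-based judge. A hypothetical checker might look like this; the regex patterns, the event-id maps, and `rule_violations` are assumptions for illustration, not TheGreatMe's actual implementation.

```python
import re
from typing import Dict, List, Set

# Hypothetical patterns for titles that are only a detail, not an event.
DETAIL_PATTERNS = [
    re.compile(r"^\d{4}[-/.]\d{1,2}([-/.]\d{1,2})?$"),                        # 2025-03-14
    re.compile(r"^v?\d+(\.\d+)+$", re.IGNORECASE),                             # v2.1, 2.0
    re.compile(r"^(completed|finished|done)\s+\d+\s+\w+$", re.IGNORECASE),     # completed 5 items
]


def rule_violations(
    output: Dict[str, str],         # event id -> title produced by the model
    manual_titles: Dict[str, str],  # event id -> title the user edited by hand
    blocked_ids: Set[str],          # ids of events the user deleted or muted
) -> List[str]:
    """List every violation of the title, manual-title, and no-revival rules."""
    problems = []
    for event_id, title in output.items():
        if any(p.match(title.strip()) for p in DETAIL_PATTERNS):
            problems.append(f"{event_id}: detail used as title: {title!r}")
        if event_id in manual_titles and title != manual_titles[event_id]:
            problems.append(f"{event_id}: manual title overwritten")
        if event_id in blocked_ids:
            problems.append(f"{event_id}: deleted or muted event revived")
    return problems


output = {
    "e1": "2025-03-14",
    "e2": "Launch Mac dashboard",
    "e3": "v2.1",
    "e4": "Completed 5 items",
    "e5": "My renamed goal",
    "e6": "Old side project",
}
manual = {"e2": "Mac dashboard launch", "e5": "My renamed goal"}
problems = rule_violations(output, manual, blocked_ids={"e6"})
```

Each violation is reported separately, so a score can count them per rule instead of collapsing everything into a single pass or fail.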

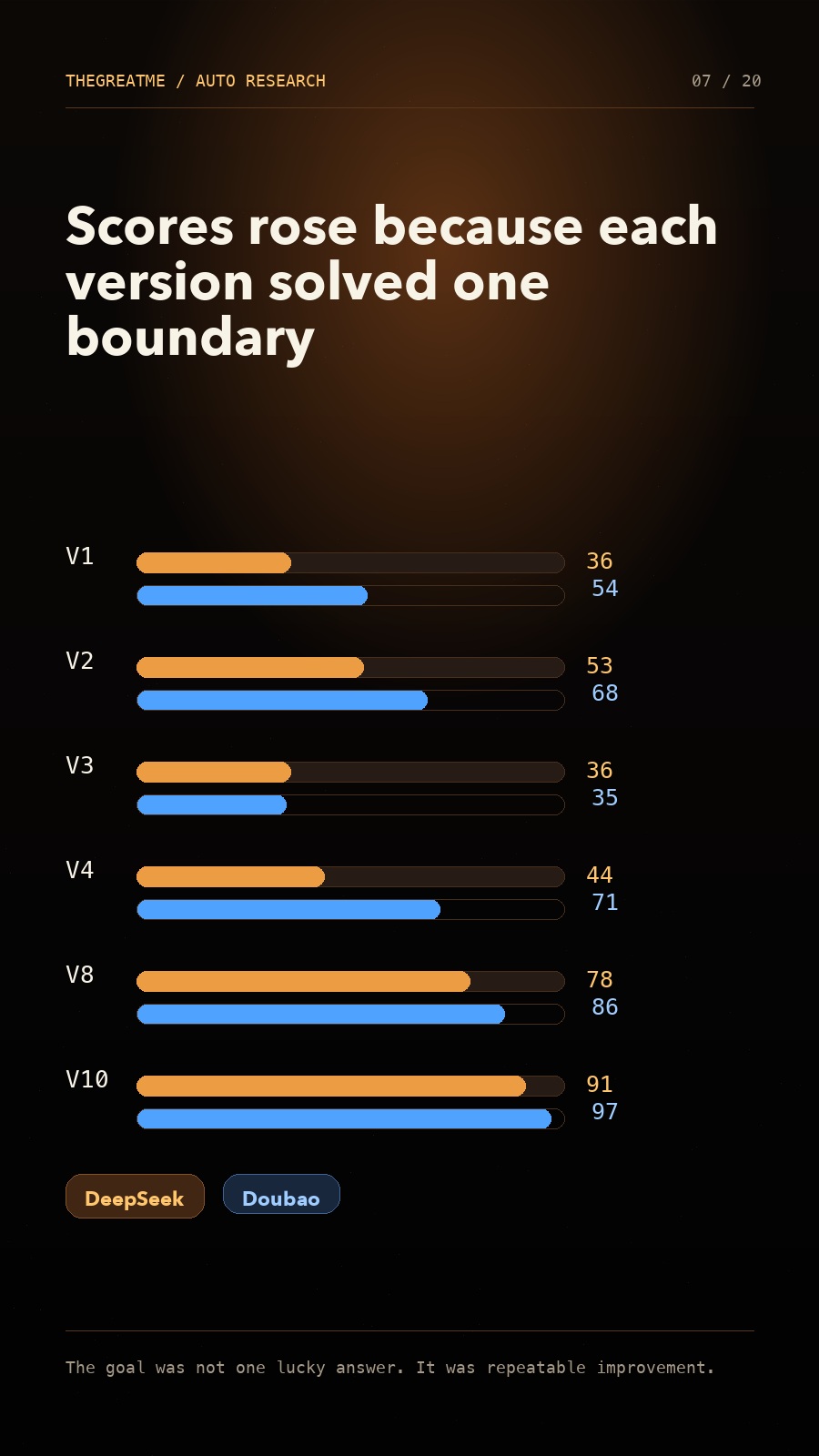

The iteration process: solve one problem at a time

The whole process went through about ten versions.

From V1 to V2, the focus was format stability and the overall wording of the rules. Before anything else, the model needed to stop producing messy output and hold the basic structure.

From V3 to V4, I started working on event granularity. The model had to distinguish what could become an event from what was merely a process description.

From V5 to V6, I focused on edge cases. Dates, version numbers, temporary completion states, and overly broad titles should not become mainlines.

After V7, the truly difficult problem appeared: secondary-event ownership.

Primary events were relatively easy. Once the model understood what counted as a mainline, the score improved quickly. Secondary events were harder, because the model had to decide whether a smaller event belonged under a parent, or whether it should stay independent as a parallel branch.

If the rule was not clear enough, the model tended to throw everything into one large bucket.
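Working on one problem per phase also suggests a simple acceptance rule that guards against the tradeoff loop described earlier: keep a change only if it improves the targeted dimension without breaking the others. The article does not say how regressions were detected, so the dimension names, the 1-point tolerance, and `accept_change` are all assumptions.

```python
from typing import Dict


def accept_change(
    old: Dict[str, float],
    new: Dict[str, float],
    target: str,
    tolerance: float = 1.0,  # hypothetical: allowed drop on non-target dimensions
) -> bool:
    """Keep a prompt change only if it improves the targeted dimension and no
    other dimension drops by more than `tolerance` points."""
    if new[target] <= old[target]:
        return False
    return all(new[dim] >= old[dim] - tolerance for dim in old if dim != target)


# Made-up per-dimension scores for one round of testing.
before = {"format": 95.0, "granularity": 80.0, "ownership": 60.0}
helpful = {"format": 95.0, "granularity": 79.5, "ownership": 75.0}
harmful = {"format": 95.0, "granularity": 70.0, "ownership": 85.0}
```

Here `harmful` raises ownership more than `helpful` does, yet it is rejected because it costs ten points of granularity.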

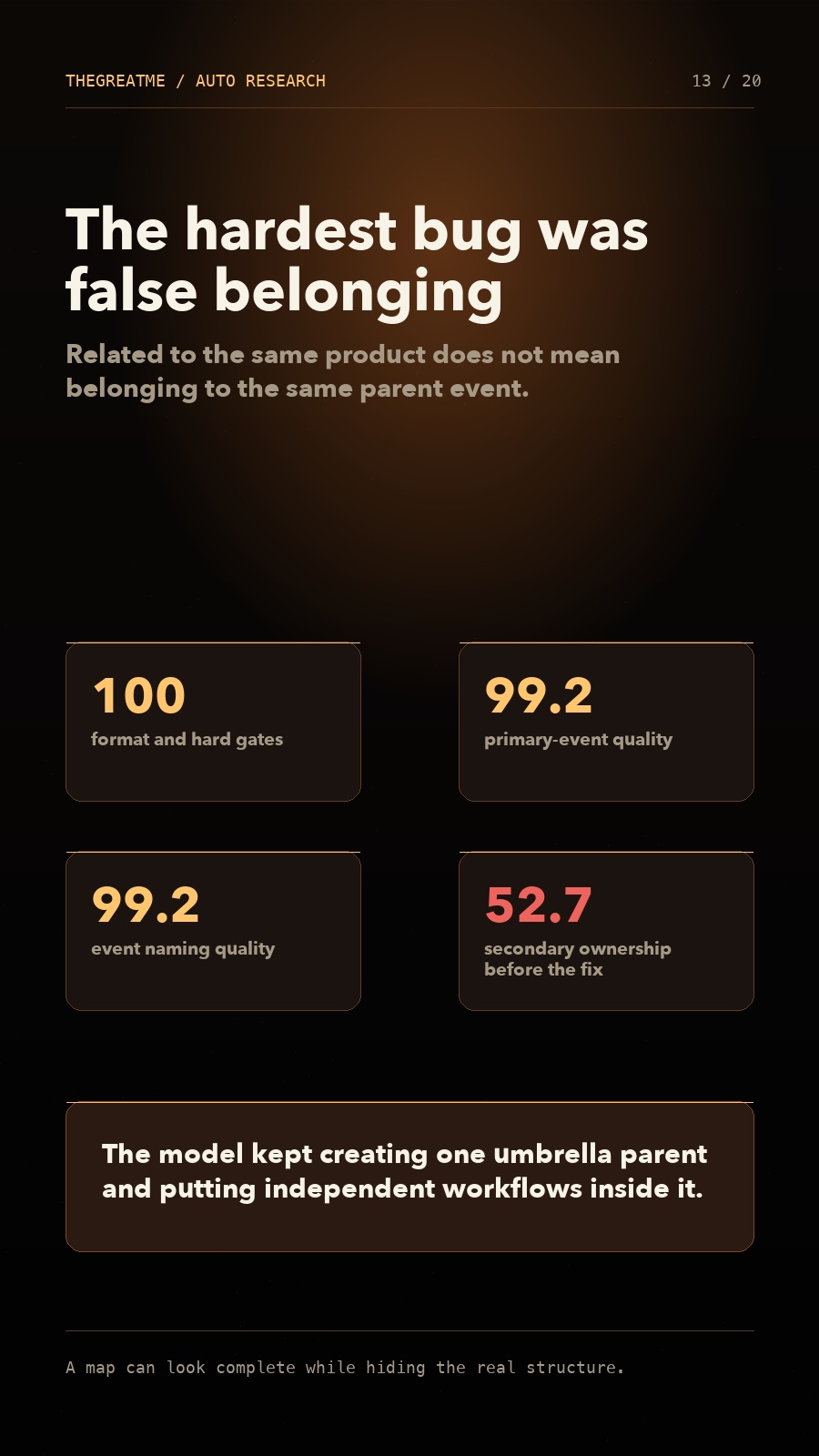

The biggest trap: false belonging

The biggest trap in this experiment was not naming or formatting. It was what I call "false belonging."

For example, one person might be working on app development, content operations, video scripts, and user feedback at the same time. These things are all related to the person, but that does not mean they should all be placed under the same primary event.

The worst case is when the AI generates a title that sounds right, such as "Overall Operations of TheGreatMe App," and then puts everything inside it.

It looks complete, but it destroys the structure. When everything belongs to one large bucket, no real relationship has been explained.

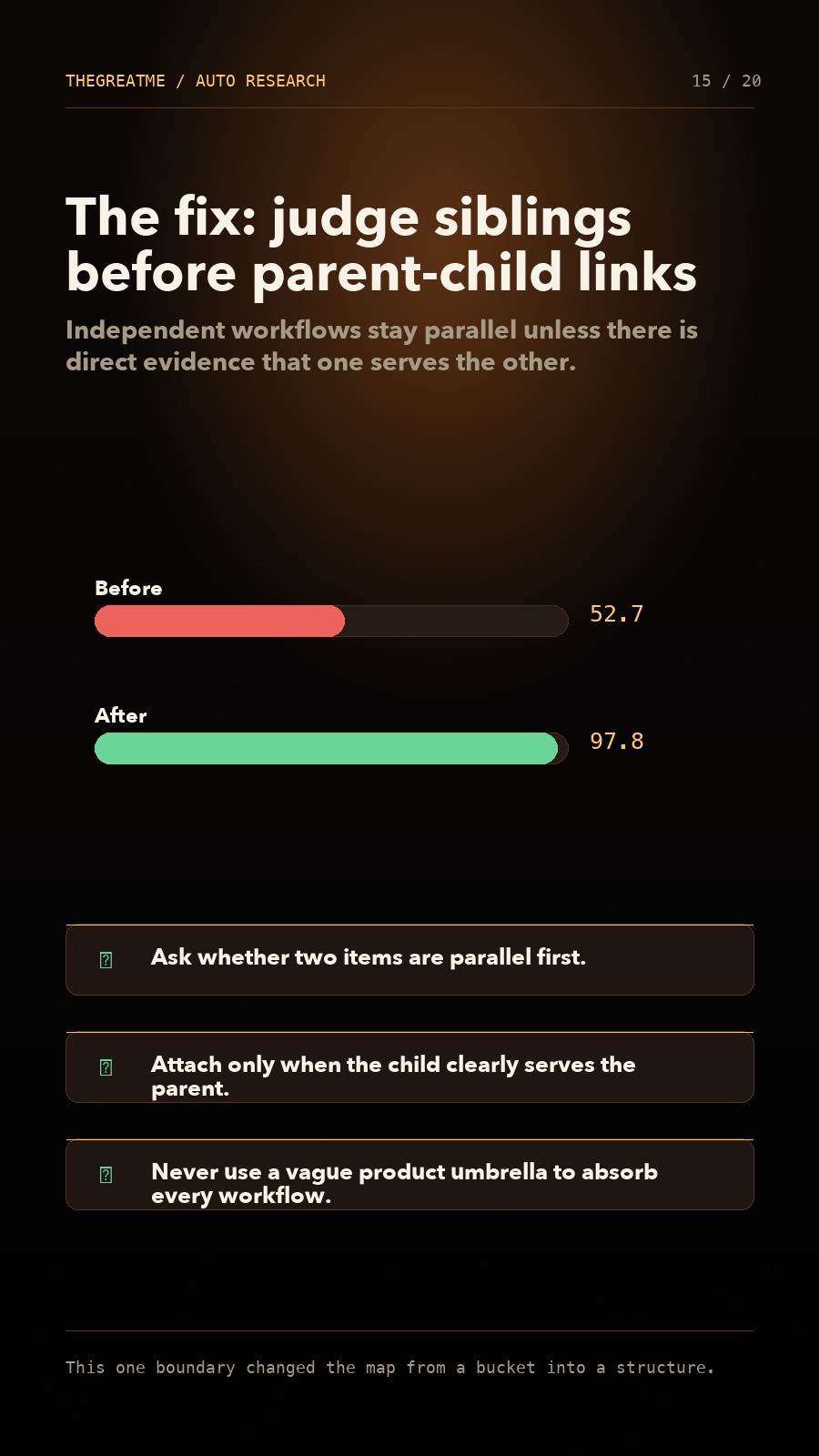

So I added a stricter boundary rule: judge sibling relationships first, then parent-child relationships. First ask whether something is an independent workflow, and only then decide whether it needs to be attached. Two things should not be forced under the same parent just because they are related to the same product.
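In the article this rule is a natural-language instruction inside the prompt, not code. Purely to make the order of the questions explicit, here is a hypothetical sketch; the `workflow` and `serves` fields stand in for judgments the model has to make from the source text, and every name and sample event is invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    title: str
    workflow: str                 # hypothetical label: the independent stream of work
    product: str                  # shared product; deliberately not used below
    serves: Optional[str] = None  # title of the event this one directly moves forward


def place(candidate: Event, parent: Event) -> str:
    """Return 'child' or 'sibling', asking the sibling question first."""
    # Step 1: an independent workflow stays a sibling, even when it touches
    # the same product as the parent.
    if candidate.workflow != parent.workflow:
        return "sibling"
    # Step 2: same workflow is necessary but not sufficient; attach only if
    # the candidate directly serves this parent.
    if candidate.serves == parent.title:
        return "child"
    return "sibling"


dashboard = Event("Ship the Mac dashboard", "app-dev", "TheGreatMe")
map_view = Event("Build the Tactical Map view", "app-dev", "TheGreatMe", serves="Ship the Mac dashboard")
script = Event("Write launch video script", "content", "TheGreatMe", serves="Ship the Mac dashboard")
bugfix = Event("Fix sync bug", "app-dev", "TheGreatMe")
```

Note that `product` never enters the decision: sharing a product is exactly the kind of surface relatedness that produced the large-bucket titles.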

After this adjustment, the secondary ownership score rose from 52.7 to 97.8.
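The article does not define how the ownership score was computed. One plausible definition, assuming each test sample carries gold parent labels, is the percentage of secondary events assigned to the correct parent; `ownership_score` and the example titles are hypothetical.

```python
from typing import Dict, Optional

MISSING = object()  # sentinel: the model did not output this event at all


def ownership_score(
    predicted: Dict[str, Optional[str]],
    gold: Dict[str, Optional[str]],
) -> float:
    """Percent of gold secondary events whose predicted parent matches the label.

    Both maps go from secondary-event title to parent title; None means the
    event stands alone as an independent branch.
    """
    if not gold:
        return 100.0
    correct = sum(
        1 for event, parent in gold.items() if predicted.get(event, MISSING) == parent
    )
    return 100.0 * correct / len(gold)


gold = {
    "Tactical Map view": "Mac dashboard",
    "Video script": None,  # independent content workflow
    "Onboarding copy": "Mac dashboard",
    "Sync bug": "Mac dashboard",
}
predicted = {
    "Tactical Map view": "Mac dashboard",
    "Video script": "Mac dashboard",  # false belonging
    "Onboarding copy": "Mac dashboard",
}
```

Under this definition, false belonging and dropped events both count as errors.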

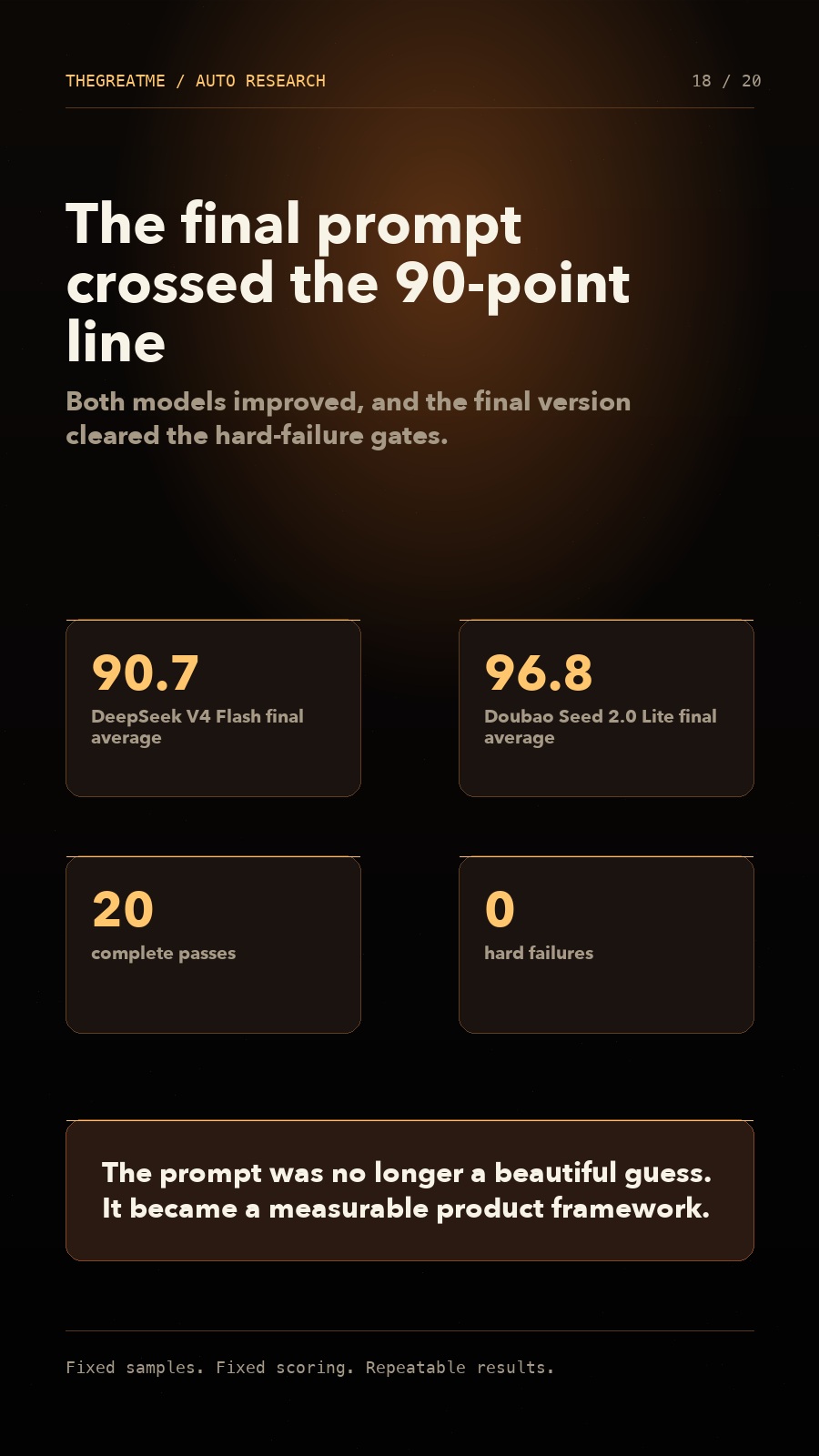

The final result: from 30 points to 90

The final result was very clear.

DeepSeek V4 Flash improved from 36.4 to 90.7.

Doubao Seed 2.0 Lite 260428 improved from 54.0 to 96.8.

This was not a lucky single run. It came from fixed samples, fixed scoring, and repeated testing.
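Repeated testing only helps if the runs are summarized beyond a single number. A minimal sketch, assuming each model has a list of scores from repeated runs on the same fixed samples; `summarize` is a hypothetical name, and the numbers below are placeholders, not the article's results.

```python
from statistics import mean, stdev
from typing import Dict, List


def summarize(runs: Dict[str, List[float]]) -> Dict[str, Dict[str, float]]:
    """Per-model mean, sample standard deviation, and worst run over repeated scores."""
    summary: Dict[str, Dict[str, float]] = {}
    for model, scores in runs.items():
        summary[model] = {
            "mean": mean(scores),
            "stdev": stdev(scores) if len(scores) > 1 else 0.0,
            "worst": min(scores),
        }
    return summary


# Placeholder numbers for illustration only.
example = summarize({"model-a": [88.0, 90.0, 92.0], "model-b": [95.0, 95.0, 95.0]})
```

Looking at the worst run alongside the mean is one way to tell a stable prompt from a lucky one.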

More importantly, the final prompt was not tied to a single model. DeepSeek is better at large structural rearrangements, while Doubao is better at daily refreshes and stable extraction. They can play different roles in the same system.
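That division of labor can be expressed as a small routing table. This is a hypothetical sketch: the job names, the model identifier strings, and `pick_model` are invented, and real API model names will differ. The design point is that both jobs share the same prompt and rules; only the model behind each job changes.

```python
from enum import Enum


class Job(Enum):
    FULL_REBUILD = "full_rebuild"    # large structural rearrangement of the map
    DAILY_REFRESH = "daily_refresh"  # incremental extraction after new check-ins


# Hypothetical identifiers; real API model names will differ.
MODEL_FOR_JOB = {
    Job.FULL_REBUILD: "deepseek-v4-flash",
    Job.DAILY_REFRESH: "doubao-seed-2.0-lite-260428",
}


def pick_model(job: Job) -> str:
    """Same prompt for every job; only the model behind it changes."""
    return MODEL_FOR_JOB[job]
```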

What this really taught me

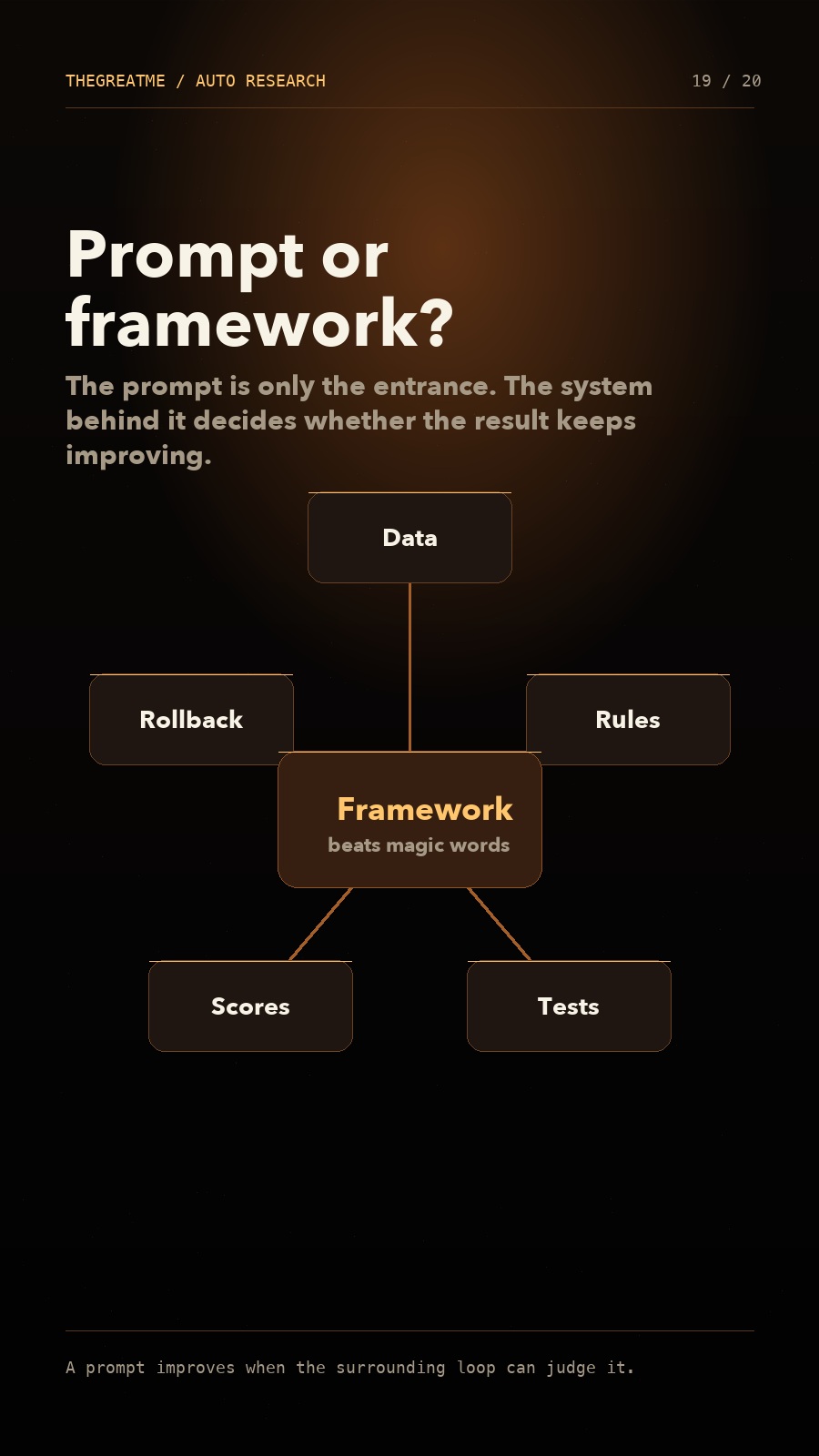

After this experiment, my understanding of prompts changed.

A prompt is not a magic spell.

The important part is not whether one sentence is beautifully written. The important part is whether there is a stable framework behind it: how the data is prepared, how the rules are split, how the tests are run, how the scores are assigned, when a change should be kept, and when it should be rolled back.

So now I prefer to think of prompt work as system engineering.

The prompt is the entrance. The framework is the core.

Back to TheGreatMe

In the end, this still comes back to the product itself.

I do not want TheGreatMe to be an ordinary check-in app, or an AI tool that only chats with you.

I want it to feel more like a system that helps you complete the mission of your life.

You write down your goals, review your wins and blockers every day, and recalibrate your next step every week. Behind the scenes, AI keeps organizing your action path, extracting key events, identifying mainlines and side branches, and turning the process of moving your life forward into an increasingly clear map.

That is why I am building the Mac dashboard and the Tactical Map.

When a person can clearly see what they are pushing forward, what happened in the past few weeks, which things are influencing each other, and where the next move should go, they are no longer just "recording life." They begin to gain a real sense of control.

Using Auto Research to improve the Tactical Map was not just a prompt experiment for me.

It made me more certain of one thing: ordinary builders can absolutely use the newest ideas from top AI researchers. The key is not to leave those ideas as talking points on social media, but to put them into your own real product, real problem, and real data, and then let them get better one round at a time.

That is what I wanted to share this time.